Explore the Capabilities of Generative AI

Learning Objectives

After completing this unit, you’ll be able to:

- Describe qualities of generative AI models as compared to other models.

- Define key vocabulary of AI language models.

- Describe capabilities of generative AI that use language models.

Artificial Intelligence in the Spotlight

The media has been abuzz with news about artificial intelligence (AI) lately, and it’s almost overwhelming. But why the huge spike in interest? AI isn’t exactly new; a lot of businesses and institutions have used AI in some capacity for years. The sudden attention to AI was arguably caused by something called ChatGPT, the first widely publicized AI-powered chatbot that could hold a conversation in a way earlier chatbots could not.

The GPT in ChatGPT stands for Generative Pre-trained Transformer. A GPT is a type of neural network, an AI model that can respond to plain-language questions or requests with responses that seem like they were written by a human. When ChatGPT and other GPTs like it were released to the public, people could experience firsthand what it was like to have a conversation with a computer. It was surprising. It was eerie. It was evocative. So of course people started paying attention!

[AI-generated image using DreamStudio at stability.ai with the prompt: “A happy robot sitting on a chair at a desk. On the desk is a laptop computer. Drawn in the style of 2D vector artwork.”]

An AI that can hold a natural, human-like conversation is clearly different from what we’ve seen in the past. As you learn in the Artificial Intelligence Fundamentals badge, there are a lot of specific tasks that AI models are trained to perform. For example, an AI model can be trained to use market data to predict the optimal selling price for a three-bedroom home. That’s impressive, but that model produces “just” a number. In contrast, some AI models can produce an incredible variety of text, images, and sounds that we’ve never read, seen, or heard before. This kind of AI is known as generative AI. It holds within it massive potential for change, both in and out of the workplace.

In this badge you learn what kinds of tasks generative AI models are trained to do, and some of the technology behind the training. This badge also explores how businesses are coalescing around specialties in the generative AI ecosystem. Finally, we end by discussing some of the concerns that businesses have about generative AI.

Possibilities of Language Models

Generative AI might seem like this hot new thing, but in reality researchers have been training generative AI models for decades. Some have even made the news in the past few years. Maybe you remember articles from 2018 when a company named Nvidia unveiled an AI model that could produce random photorealistic images of human faces. The pictures were surprisingly convincing. And although they weren’t perfect, they were definitely a conversation-starter. Generative AI was slowly beginning to enter the public consciousness.

As researchers worked on AI that could make specific kinds of images, others were focused on AI related to language. They were training AI models to perform all sorts of tasks that involved interpreting text. For example, you might want to categorize reviews of one of your products as positive, negative, or neutral. That’s a task that requires an understanding of how words are combined in everyday use, and it’s a great example of what experts call natural language processing (NLP). Because there are so many ways to “process” language, NLP describes a broad category of AI. (For more on NLP, see the Natural Language Processing Basics badge.)
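To make the review-categorization task concrete, here is a minimal sketch in Python. It is a toy keyword counter, not how a trained NLP model works. The word lists and the `categorize_review` function are made up for illustration.

```python
import re

# Hypothetical, hand-picked word lists. A trained NLP model learns these
# associations from sample text instead of relying on fixed lists.
POSITIVE_WORDS = {"great", "love", "excellent", "good", "happy"}
NEGATIVE_WORDS = {"bad", "broken", "terrible", "poor", "disappointed"}


def categorize_review(review: str) -> str:
    """Label a review as positive, negative, or neutral by counting keywords."""
    words = re.findall(r"[a-z']+", review.lower())
    positives = sum(word in POSITIVE_WORDS for word in words)
    negatives = sum(word in NEGATIVE_WORDS for word in words)
    if positives > negatives:
        return "positive"
    if negatives > positives:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    for text in [
        "I love this blender, it works great!",
        "Arrived broken and support was terrible.",
        "It is a blender.",
    ]:
        print(categorize_review(text), "-", text)
```

This sketch would label a review containing “not good” as positive, because it ignores how words combine. That gap is the reason the task calls for a model that has learned how words are used together.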

Some AIs that perform NLP are trained on huge amounts of data, which in this case means samples of text written by real people. The internet, with its billions of web pages, is a great source of sample data. Because these AI models are trained on such massive amounts of data, they’re known as large language models (LLMs). LLMs capture, in incredible detail, the language rules humans take years to learn. These large language models make it possible to do some incredibly advanced language-related tasks.

Summarization

If you’re given a sentence and you understand how all the words come together to make a point, you can probably rewrite the sentence to express the same idea. While AI models don’t know syntax, they learn to identify and apply syntactic patterns from the huge amounts of data they’re trained on. This allows them to know which words can be swapped for others and remix sentences similar to how a human might. Taking a whole paragraph and condensing it into one or two sentences is just another kind of remix. This kind of AI-assisted summarization can be very helpful in the real world. It can create meeting notes from an hour-long recording. Or write an abstract of a scientific paper. It’s the ultimate elevator-pitch generator.

Translation

LLMs are like a collection of rules for how a language structures words into ideas. Each language has its own rules. In English, we typically put adjectives before nouns, but in French it’s usually the other way around. AI translators are trained to learn both sets of rules. So when it’s time for a sentence remix, AI can use a second set of rules to express the same idea. Voilà, you have yourself a great translation. And programming languages are languages too. They have their own set of rules, so AI can translate a loose set of instructions into actual code. A personal pocket programmer can open a lot of doors for a lot of people.

Error Correction

Even the most experienced writers make the occasional grammatical or spelling mistake. Now, AI can detect (and sometimes auto-correct) anything amiss. Patching up errors also matters when you’re simply listening to someone speak. You might miss a word or two because you’re in a noisy environment, but you use context to fill in the gap. AI can do this too, making speech-to-text tasks like closed captioning even more accurate.
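As a rough illustration of the detect-and-correct idea, the Python sketch below suggests a fix for a misspelled word. It swaps in plain string similarity from the standard library’s `difflib` module rather than a trained AI model, and it ignores surrounding context. The vocabulary and the `suggest_correction` function are assumptions made up for this example.

```python
import difflib

# Hypothetical tiny vocabulary. A real corrector would use a far larger
# word list and would also weigh the context around each word.
VOCABULARY = ["grammar", "spelling", "mistake", "writer", "occasional"]


def suggest_correction(word: str) -> str:
    """Return the closest vocabulary word, or the word itself if none is close."""
    matches = difflib.get_close_matches(word.lower(), VOCABULARY, n=1, cutoff=0.8)
    return matches[0] if matches else word


if __name__ == "__main__":
    for typed in ["speling", "grammer", "laptop"]:
        print(typed, "->", suggest_correction(typed))
```

Words with no close match, such as “laptop” here, are returned unchanged, so the sketch only corrects when it finds a strong candidate.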

Question Answering

This is the task that launched generative AI into the limelight. AIs such as GPTs are capable of interpreting the intention of a question or request. Then, they can generate a large amount of text based on the request. For example, you could ask a GPT for a one-sentence summary of each of the three most popular works of William Shakespeare, and you’d get:

"Romeo and Juliet" - A tragic tale of two young lovers from feuding families whose love ultimately leads to their untimely deaths.

"Hamlet" - The story of a prince haunted by his father’s ghost, grappling with revenge and the existential questions of life and death.

"Macbeth" - A chilling drama of ambition and moral decline as a nobleman, driven by his wife’s ambition, succumbs to a bloody path of murder to seize the throne.

Then, you could continue the conversation by asking for more information about Hamlet, as though you were talking with your Language Arts teacher. This kind of interaction is a great example of getting just-in-time information with a simple request.

Guided Image Generation

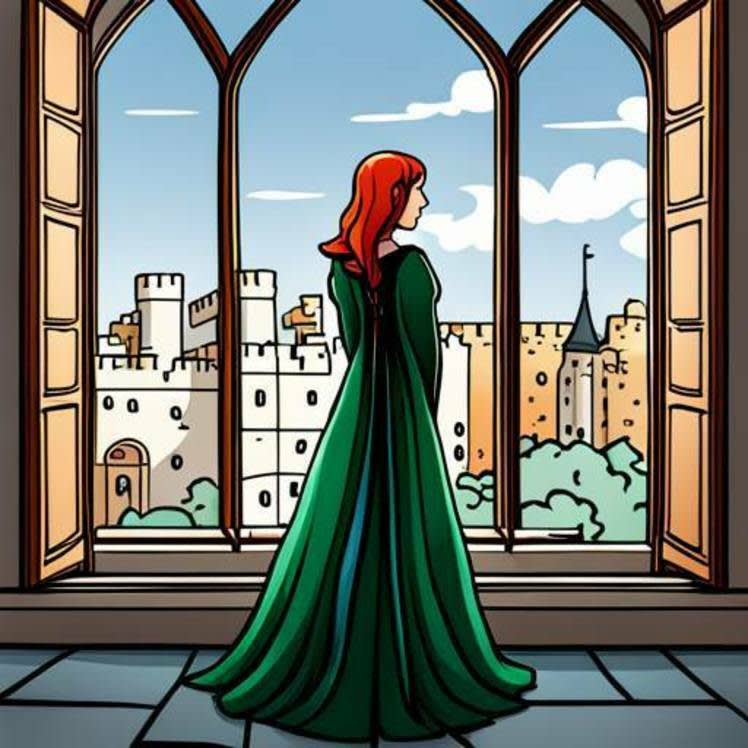

LLMs can be used in tandem with image generation models so that you can describe the image you want, and AI will attempt to make it for you. Here’s an example of asking for “a 2D line art drawing of Juliet standing at the window of an old castle.” Because there are so many descriptions and images of Romeo and Juliet on the internet, the AI generator didn’t need any further information to make a guess at an appropriate image.

[AI-generated image using DreamStudio at stability.ai with the prompt, “A 2D line art drawing of Juliet standing at the window of an old castle.”]

Related to guided image generation, some AI models can add new content into existing images. For example, you could extend the borders of a picture, allowing the AI to draw in what is likely to appear based on the context of the original picture.

Text-to-Speech

Similar to how AI can convert a string of words into a picture, there are AI models that can convert text to speech. Some models can analyze audio samples of a person speaking. They learn that person’s unique speech patterns, and can reproduce them when converting text to new audio. To the casual listener, it’s hard to tell the difference.

These are just a few examples of how LLMs are used to create new text, images, and sounds. Almost any task that relies on an understanding of how language works can be augmented by an AI. It’s an incredibly powerful tool that you can use for both work and play.

Impressive Predictions

Now that you have an idea of what generative AI is capable of, it’s important to make something very clear. The text that a generative AI generates is really just another form of prediction. But instead of predicting the value of a home, it predicts a sequence of words that are likely to have meaning and relevance to the reader.

The predictions are impressive, to be sure, but they are not a sign that the computer is “thinking.” It doesn’t have an opinion about the topic you ask about, nor does it have intentions or desires of its own. If it ever sounds like it has an opinion, that’s because it’s making the best prediction of what you expect as a response. For example, asking someone “Do you prefer coffee or tea?” elicits a certain kind of expected response. A well-trained model can predict a response, even if it doesn’t make sense for a computer to want any kind of drink.
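The idea that generated text is a form of prediction can be sketched with a very small word-pair model in Python. This is a deliberately simple stand-in and not how GPTs are built. The training text and the function names `train_bigrams`, `predict_next`, and `generate` are made up for illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up training text. Real language models train on vastly more data.
TRAINING_TEXT = "i prefer tea . i prefer tea . you prefer coffee ."


def train_bigrams(text: str) -> dict:
    """Count, for each word, which words follow it in the training text."""
    words = text.split()
    followers = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers


def predict_next(followers: dict, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    if word not in followers:
        return None
    return followers[word].most_common(1)[0][0]


def generate(followers: dict, start: str, length: int) -> list:
    """Build a word sequence by repeatedly predicting the next word."""
    words = [start]
    for _ in range(length):
        next_word = predict_next(followers, words[-1])
        if next_word is None:
            break
        words.append(next_word)
    return words


if __name__ == "__main__":
    model = train_bigrams(TRAINING_TEXT)
    print(" ".join(generate(model, "you", 3)))
```

Starting from “you”, this sketch continues with “prefer tea”, even though the training text says “you prefer coffee”. It has no preference of its own; it simply follows the most common pattern it counted.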

In the next unit, you learn about some of the technology that makes generative AI possible.

Resources

- Trailhead: Artificial Intelligence Fundamentals

- Trailhead: Natural Language Processing Basics

- Salesforce Help: About Einstein Generative AI